Since the release of ChatGPT in late 2022, it’s hard to go anywhere without hearing about AI. While AI wasn’t invented in 2022, the release of ChatGPT marked a fundamental shift in how society thinks about AI and what it’s capable of. And although AI has been used in many applications long before ChatGPT’s release, mostly through the use of machine learning (ML) models, ChatGPT brought a new type of model to the public: the large language model (LLM).

Since then, new versions of LLMs continue to show improved performance, and new products have come to market to leverage LLMs for much more than just simple text generation. AI agents are an example of such an application, where LLMs are leveraged to make decisions or take actions on behalf of humans. It wouldn’t seem unrealistic that these AI agents might be responsible in the future for determining whether to approve a customer for a credit product.

However, banks have used AI for years to approve or decline credit applications through traditional machine learning models like regressions and gradient boosting machines (GBMs). It’s natural to wonder how these traditional approaches compare to an LLM-based approach. Will LLMs supersede the current standard for credit risk decision making?

Early Performance Data of LLMs as Prediction Models

Let’s focus on GBMs as the main point of performance comparison to LLMs, as they are generally one of the highest performing models for credit risk classification tasks (determining how likely a borrower is to pay back their loan). LLMs and GBMs are very different models. LLMs are generalizable models able to ingest and produce textual data. In contrast, GBMs work with tabular data (i.e., rows and columns) and require pre-training on an extensive domain-specific dataset to produce a customized model for a specific use case.

Credit application data, as well as other financial data like transaction data, is typically provided in a tabular format, rather than natural language description, so GBM models are a natural fit for this type of data when compared with LLMs. Despite these differences, multiple academic papers have evaluated the performance of LLMs against GBMs for credit risk assessment on publicly available datasets.

A June 2025 paper by Qizhao Chen published in the Journal of Computer and Communication compared the performance of a traditional GBM model and other traditional ML models (e.g., random forest, support vector machine, MLP neural network) to an LLM by converting each row of data into a textual prompt for the LLM.

Key Evaluation Metrics for Classification Models

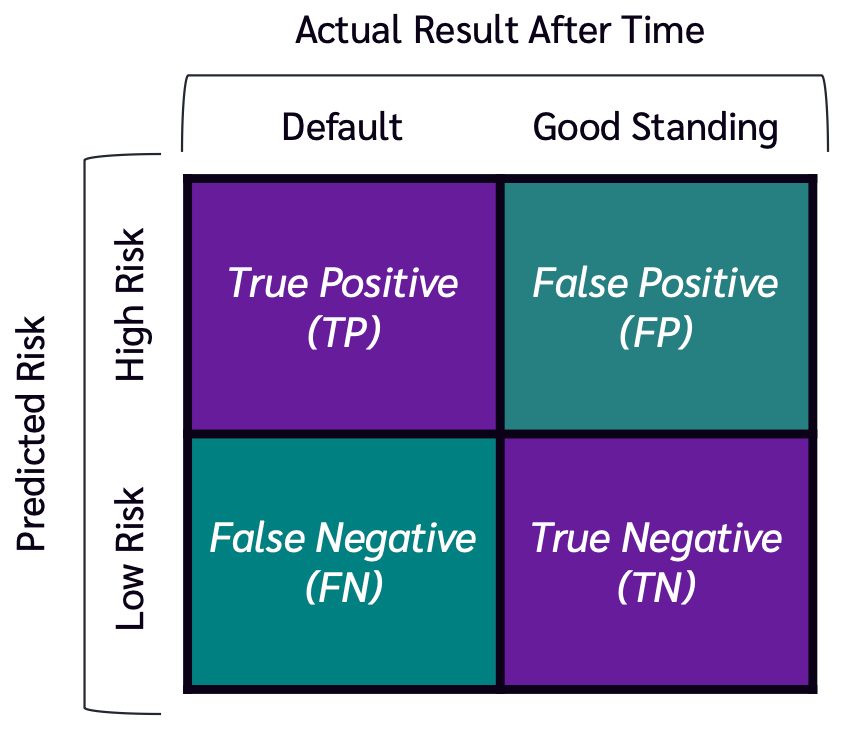

While the LLM had high precision, meaning that it identified few false positives, its recall was poor, meaning that it was unable to identify many true positive cases. This poor recall led to the LLM performing worse overall than all other models tested. A similar paper from October 2025 (AlMarri et al.) confirmed the same result using a different dataset: all LLMs tested underperformed the GBM model when assessing credit risk. That being said, the LLM did perform better than random guessing, showing that it does have some predictive ability.

The authors also tested whether the GBM and LLM used together in an ensemble model would improve predictions over using the GBM alone, but the ensemble model performed worse than using the GBM in isolation. In summary, LLMs could potentially be useful in cases where training a GBM would be impractical, such as in cases of extremely limited historical data. But otherwise, the GBM model alone seems to lead to better performance.

Further Considerations

In addition to lagging performance relative to established model paradigms, LLMs present challenges in terms of compute cost and model explainability. Compute cost and time are key concerns when considering using LLMs. A 2023 study (Hegselmann et al.) on using LLMs with various tabular datasets noted that, compared to traditional models, the LLM consumed more computational resources for both training and inference. Especially in live environments such as instant credit approval or transaction fraud decisioning scenarios, this is an important consideration and in some cases may make deployment of LLMs impractical or cost prohibitive.

Model explainability is another key concern in financial contexts. Financial institutions often need to be able to explain why decisions were made to meet regulatory requirements. In Canada, OSFI’s Guideline E-23 sets out model explainability requirements from a model risk management perspective. In the US, the Equal Credit Opportunity Act requires lenders to be able to state specific reasons for declining an applicant’s request for credit. The October 2025 paper mentioned earlier also explored feature importance, and tested the LLM’s ability to explain its rationale behind its predictions.

In one case, the observed feature importance using a standard feature importance tool did not align with the LLM’s stated interpretation of that feature. This raises concerns about whether LLMs are able to consistently interpret features in a transparent way. If they’re not able to do so, they may not be able to meet regulatory requirements for explainability.

The Future of LLMs in Lending

Given the current performance of LLMs for classification on structured data, they likely aren’t the best tool for most automated decisioning in the lending industry for the time being. Traditional GBM models are able to perform as well or better while using less computational resources and having more established explainability tools. However, that doesn’t mean there aren’t areas that LLMs can help.

Use of LLM-based tools like Claude Code and Claude in Excel, as well as standard chatbots like Gemini, can help analysts work quicker when training, deploying and monitoring traditional risk models and NPV models, including leveraging the LLM for data cleaning tasks where data is unstructured.

Reducing the time it takes to build these models can help institutions improve model performance through better monitoring, more frequent regrounding on fresh data, and quicker integration of new features.

Additionally, initial research does show some promise for LLMs in cases where existing data is extremely sparse due to their ability to make inferences without domain-specific training data. A recent paper also showed promise using LLMs as “translators” to explain in plain language feature importance from established feature explanation tools with highly technical outputs. As an example use case, this could help enable better decline explanations, creating a better customer experience for applicants.

How Payson Can Help

At Payson Solutions, we have helped lenders build machine learning and AI solutions for risk assessment, fraud detection and more. If you’re interested in better understanding how to apply AI in your business, please reach out. We’d be happy to connect.